Performance Considerations

Developers, and especially JavaScript developers, can be extremely indecisive. “I’ve found multiple ways to achieve the same thing, but which one is the fastest? Which one is the most appropriate, the optimal one?” Most of us can relate to such questions, and although there is no definitive answer to which method you should choose, there are ways to make reaching a verdict a lot easier. One of the factors that will greatly affect your choice is the overall speed of the program. It’s not the only factor, as others such as cleanliness, maintainability and speed of development come into play, but speed and efficiency are very important nonetheless.

How to measure time in JS

In the past, the most prevalent way to take time measurements was new Date().getTime(). That approach fell out of favour as more features were added to the language, and console.time provided a nice alternative. console.time is pretty simple: you specify a string name for the timer and call the method to start counting. After the code you want to check has finished executing, you call console.timeEnd with the same name as its only argument:

console.time("mycode");

//code that takes some time

console.timeEnd("mycode");

The result gets printed in the console like this:

mycode: 355ms

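Unfortunately, console.time only prints the elapsed time to the console; it does not return a value you can store or compare in code. Its MDN page used to flag it as non-standard, but it is now part of the WHATWG Console Standard, so it remains fine for quick checks.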

Date().getTime is coarse, console.time only prints to the console, so how can I measure my code’s execution time?

When it comes to raw performance measurement in JavaScript, performance.now() is the way to go. The Performance interface contains various performance-related methods (duh!) that you can use to take time measurements. now() is the method you will probably use the most, and it is the one described in this post.

.now()

The method’s functionality is pretty simple and straightforward.

The performance.now() method returns a DOMHighResTimeStamp, measured in milliseconds, with sub-millisecond resolution. Browsers may round the value to limit timing attacks, so the exact precision varies (thanks MDN <https://developer.mozilla.org/en-US/docs/Web/API/Performance/now> ).

let timeBefore = performance.now();

someMethod();

let timeAfter = performance.now();

let diff = timeAfter - timeBefore;

console.log("Diff: " + diff);

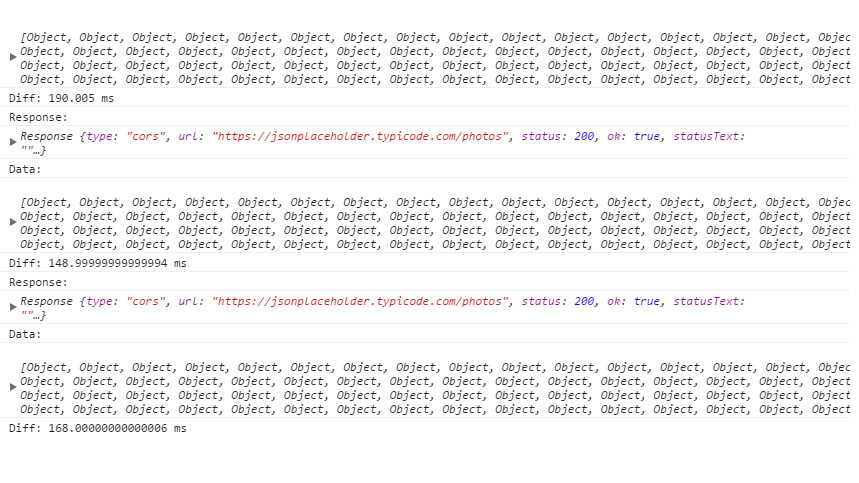
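A single measurement can be noisy, so it often helps to repeat it a few times. The following is a minimal sketch of that idea, not code from the original post: measure and sumTo are hypothetical names introduced here for illustration, and the workload is arbitrary.

```javascript
// Hypothetical helper (not from the original post): times a synchronous
// function over several runs using performance.now().
function measure(fn, runs = 5) {
  const samples = [];
  for (let i = 0; i < runs; i++) {
    const before = performance.now();
    fn();
    samples.push(performance.now() - before);
  }
  const total = samples.reduce((sum, ms) => sum + ms, 0);
  return {
    samples,                        // elapsed milliseconds for each run
    average: total / runs,          // mean over all runs
    fastest: Math.min(...samples),  // best single run
  };
}

// Example workload, chosen only for illustration.
function sumTo(n) {
  let sum = 0;
  for (let i = 0; i < n; i++) sum += i;
  return sum;
}

const result = measure(() => sumTo(1e6), 5);
console.log("Average: " + result.average.toFixed(3) + "ms");
console.log("Fastest: " + result.fastest.toFixed(3) + "ms");
```

The actual numbers depend on the machine and the browser, so treat them as relative indicators rather than absolute values.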

To demonstrate the use of performance.now() in a more real-life example, let’s measure how long it takes to fetch 5000 photos from JSONPlaceholder (https://jsonplaceholder.typicode.com/).

We declare timeBefore right before calling fetch, and timeAfter right after the data has been parsed and printed to the console window. The result is also printed in the console.
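The steps just described could look roughly like the sketch below. It is an approximation rather than the original code: timeFetch is a hypothetical name, the fetchImpl parameter is an addition that lets the function run without a network connection, and the /photos endpoint in the usage comment is assumed to be the one that returns the 5000 photos.

```javascript
// Sketch: time a fetch plus JSON parsing with performance.now().
// fetchImpl is a hypothetical parameter (not in the original post);
// it defaults to the global fetch.
async function timeFetch(url, fetchImpl = fetch) {
  const timeBefore = performance.now();   // right before calling fetch

  const response = await fetchImpl(url);
  const data = await response.json();     // parse the response body
  console.log(data);                      // print the parsed data

  const timeAfter = performance.now();    // right after parsing and printing
  return { data, elapsed: timeAfter - timeBefore };
}

// Usage (needs network access; the endpoint is an assumption):
// timeFetch("https://jsonplaceholder.typicode.com/photos")
//   .then(({ elapsed }) => console.log("Diff: " + elapsed));
```

Because the timer wraps both the network request and the console output, the measured value includes everything between the two performance.now() calls, not just the download.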

You can see the results of three consecutive executions in the next screenshot (Google Chrome):